Implementing branch previews in Google Cloud Platform

Contents

- Why branch previews are essential in software development
- Branch previews implementation through Github Actions with Google Cloud Platform
- Clean up

Why Branch Previews Are Essential in Software Development

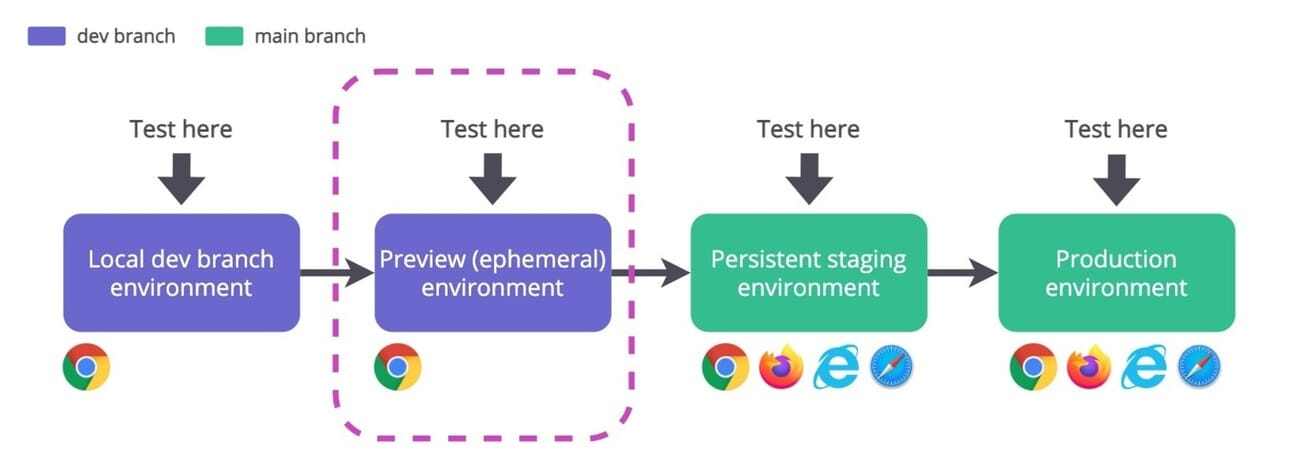

Every developer knows that writing code is only one part of the software development process. An equally important step is testing the changes to ensure they work as expected and do not introduce bugs into the existing system. This is where branch previews come into play. They provide a staging ground where developers can deploy, test, and preview changes in isolation from the main codebase, ensuring a smooth and bug-free production environment.

What are Branch Previews?

In development settings where multiple contributors are frequently pushing new code, keeping track of and testing these updates can become quite challenging. Branch previews significantly simplify this process. They allow for the creation of temporary environments where code from different branches can be deployed, tested, and previewed before it's merged into the main branch.

Why are Branch Previews So Useful?

1. Isolated Testing Environment:

Branch previews allow developers to test their code in an environment identical to production while keeping the new code isolated from the stable production environment. This makes it possible to identify and fix issues before they affect the existing system.

2. Better Collaboration:

Team members can share the unique URL of the branch preview with colleagues and stakeholders. This allows for interactive feedback, fostering seamless collaboration whether it's tweaking the UI components, fixing bugs, or improving workflow.

3. Detection of Integration Issues Early:

Branch previews let developers test how new code interacts with the existing codebase, providing a chance to rectify any integration issues. By catching these potential problems early (before merging), teams can ensure a more seamless integration process when the new code is finally pushed into the main branch.

4. Quality Assurance:

By testing the new branches in a secure environment, teams can assure the quality of their software. This additional measure helps teams maintain a robust, error-free codebase.

5. Continuous Deployment & Integration:

With the concept of DevOps and CI/CD becoming standard practices in software development, branch previews form a vital part of this lifecycle. The ability to preview changes in a separate yet identical environment aids the CI/CD process by ensuring any changes won't break the production environment.

Branch Previews - A Software Development Boon

To sum up, branch previews are not just useful but crucial in the modern software development lifecycle. By providing developers with a platform where proposed changes can be tested in isolation, we're stepping towards risk-free, quality software development.

Branch previews foster collaboration, increase code quality, and drive an efficient CI/CD workflow, making them a must-have feature for development teams. As a developer, if you're not yet utilizing branch previews, you're missing out on an element that could significantly streamline your development process.

Branch previews implementation through Github Actions with GCP

Google provides a great tutorial on implementing branch previews with their Cloud Build service. You can follow it and see if it fits your requirements. However, after going through the tutorial myself, I realized that I needed more customization and that it would be better to integrate the branch deployment feature into my organization's current GitHub workflow instead of using a cloudbuild file.

Requirements:

- A Google Cloud service account with these permissions (More information on service accounts here)
  - Cloud Run Admin
  - Artifact Registry Administrator
- A GitHub secret containing the service account access credentials in JSON (More information on how to create access credentials here)
- An Artifact Registry repository (More information on repository creation here)
- Knowing how to containerize your app with Docker

Services that we will be using for branch deployment:

- Cloud Run
- Artifact Registry
- Cloud SQL (Bonus)

Variables:

- LOCATION: Region where the service is located (a list of regions is available here)
- IMAGE_NAME: Name of the image you built with docker compose, as specified in your docker-compose file
- PROJECT_ID: ID of your GCP project, found in the Cloud console
- REPOSITORY: Name of your Artifact Registry repository
- APP_NAME: Name of your app in the repository
- NAMESPACE: Either your GitHub username or your organization
- USERNAME: Your GitHub username

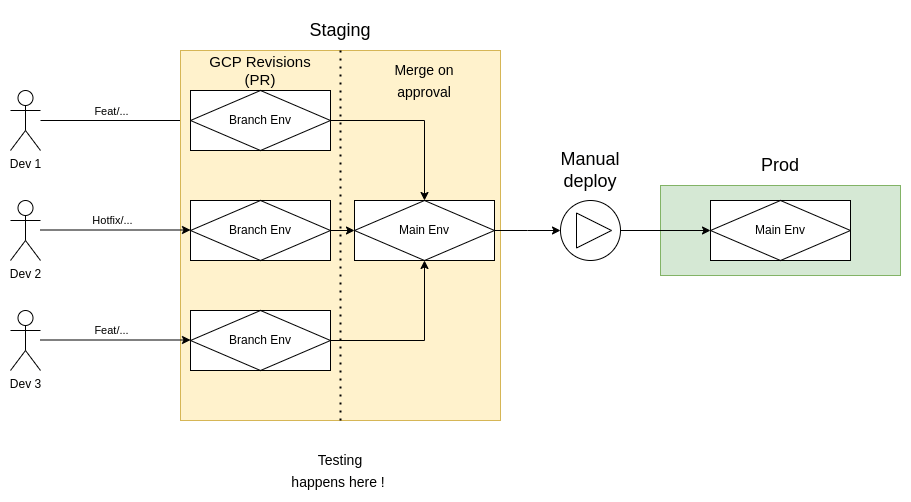

This is the resulting development flow. Each square with a diamond inside represents a deployment.

In this tutorial we will focus on the staging part of the development flow.

Workflow configuration

    env:
      TAG: pr-${{ github.event.pull_request.number }} # This is to identify revisions
      DOCKER_BUILDKIT: 1
      COMPOSE_DOCKER_CLI_BUILD: 1

    on:
      pull_request:
        branches:
          - 'main'

I've divided the branch preview workflow into 2 major jobs.

1. Configure and push your container to artifact registry

The first job consists of building the docker image of your application and pushing it to the Artifact Registry

Here the GCP_CREDENTIALS secret is your service account credentials.
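The image reference that this job tags and pushes follows the Artifact Registry naming scheme LOCATION-docker.pkg.dev/PROJECT_ID/REPOSITORY/APP_NAME:TAG. As a minimal sketch, the helper below assembles that reference from the variables listed earlier. The function name `image_ref` and the example values are made up for illustration and are not part of the workflow.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build the Artifact Registry image reference
# from the variables described in the "Variables" list.
image_ref() {
  location="$1"
  project_id="$2"
  repository="$3"
  app_name="$4"
  tag="$5"
  printf '%s-docker.pkg.dev/%s/%s/%s:%s\n' \
    "$location" "$project_id" "$repository" "$app_name" "$tag"
}

# Example values are placeholders, not real resources.
image_ref "us-central1" "my-project" "my-repo" "my-app" "pr-42"
# prints: us-central1-docker.pkg.dev/my-project/my-repo/my-app:pr-42
```

Writing the reference out once like this makes it easier to spot a mismatch between the `docker tag` and `docker push` lines, which must use exactly the same string.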

    configure_image:
      environment: staging
      runs-on: ubuntu-latest
      steps:
        - name: Checkout
          uses: actions/checkout@v3

        - id: auth
          uses: google-github-actions/auth@v1
          with:
            credentials_json: ${{ secrets.GCP_CREDENTIALS }} # Service account with enough permissions

        - name: Set up Cloud SDK # Necessary because we're using the gcp cli
          uses: google-github-actions/setup-gcloud@v1

        - name: Build Docker image
          run: docker compose -f docker-compose.yaml build

        - name: Tag and Push Container
          run: |
            gcloud auth configure-docker LOCATION-docker.pkg.dev
            docker tag IMAGE_NAME LOCATION-docker.pkg.dev/PROJECT_ID/REPOSITORY/APP_NAME:${{ env.TAG }}
            docker push LOCATION-docker.pkg.dev/PROJECT_ID/REPOSITORY/APP_NAME:${{ env.TAG }}

2. Deploy to Cloud Run

deploy:

environment: staging

runs-on: ubuntu-latest

needs: configure_image

steps:

- id: auth

uses: google-github-actions/auth@v1

with:

credentials_json: $ # Service account with enough permissions

- name: Deploy to Cloud Run

id: deploy

uses: google-github-actions/deploy-cloudrun@v1

with:

service: SERVICE_NAME

image: LOCATION-docker.pkg.dev/PROJECT_ID/REPOSITORY/APP_NAME:$

region: LOCATION

tag: $

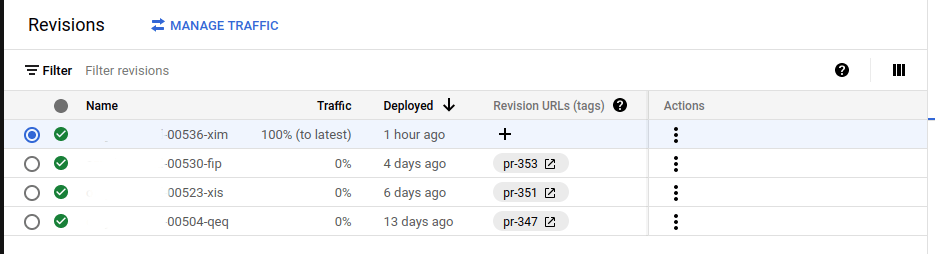

no_traffic: true # We dont want traffic on the created revision This is the result in the “Revisions” tab in the Cloud Run service. Each revision is a PR. You can preview your branches when clicking on the tag of the revision. For example, this is a staging Cloud Run service with 4 PR that are open and are in the process of being reviewed and/or tested.

Note that every new commit on the branch in preview creates a new revision with the most up-to-date commit and disables the revision of the previous commit, which is very handy.
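Cloud Run serves a tagged revision at the service hostname prefixed with the tag and three dashes. Assuming that convention, here is a rough sketch of how a preview URL relates to the service URL. The helper name `preview_url` and the example service URL are made up for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: derive the URL of a tagged revision from the service URL.
preview_url() {
  service_url="$1"                 # URL shown on the Cloud Run service page
  tag="$2"                         # the TAG value from the workflow, e.g. pr-42
  host="${service_url#https://}"   # strip the scheme to get the hostname
  printf 'https://%s---%s\n' "$tag" "$host"
}

# The service URL below is a placeholder.
preview_url "https://my-app-abc123-uc.a.run.app" "pr-42"
# prints: https://pr-42---my-app-abc123-uc.a.run.app
```

This is the same address you land on when clicking the tag in the "Revisions" tab, so it can be shared with reviewers directly.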

Bonus: Preview your branch directly in Github

Sometimes it is useful for the reviewers to see the changes visually so, in order to facilitate this, you can add a link to open your revision URL from Github directly.

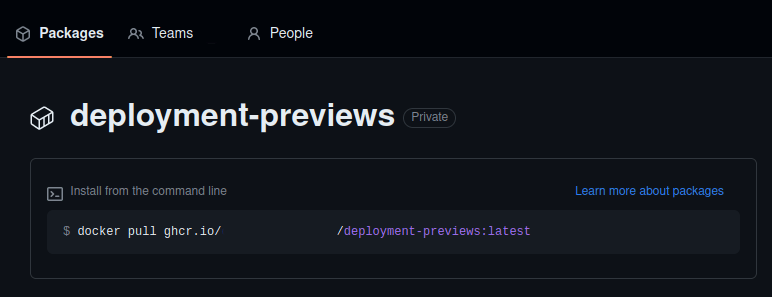

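1. First you need to add the deployment preview custom cloud builder from GCP to your organization's GitHub package registry

This requires a token with the write:packages permission and some Docker configuration. These are the basic commands needed to push the deployment previews image from Google to your GitHub package registry:

    # Log in to ghcr.io first; TOKEN is a placeholder for a token with the write:packages scope
    echo "$TOKEN" | docker login ghcr.io -u USERNAME --password-stdin

    git clone https://github.com/GoogleCloudPlatform/python-docs-samples
    cd python-docs-samples/run/deployment-previews
    # Tag the image at build time so that the push below has something to upload
    docker build -t ghcr.io/ORGANIZATION/IMAGE_NAME:latest .
    docker push ghcr.io/ORGANIZATION/IMAGE_NAME:latest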


More information on this process can be found in Github’s official docs and in this handy tutorial

Upon successfully uploading the image to Github, you should now have a new package in the "package" tab.
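One detail that is easy to trip over: Docker image paths must be lowercase, even when your GitHub organization name contains capitals. As a small sketch, the helper below builds a ghcr.io reference and lowercases the namespace and image name. The function name `ghcr_ref` and the example organization are made up for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build a ghcr.io image reference.
# Image paths must be lowercase, so namespace and image name are lowercased.
ghcr_ref() {
  namespace=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
  image=$(printf '%s' "$2" | tr '[:upper:]' '[:lower:]')
  tag="${3:-latest}"   # default to "latest" when no tag is given
  printf 'ghcr.io/%s/%s:%s\n' "$namespace" "$image" "$tag"
}

# "My-Org" is a placeholder organization name.
ghcr_ref "My-Org" "deployment-previews"
# prints: ghcr.io/my-org/deployment-previews:latest
```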

2. You can now use the package in your Github workflows

    preview:
      needs: deploy
      environment: staging
      runs-on: ubuntu-latest
      container: # Using the ghcr.io image that we pushed
        image: ghcr.io/NAMESPACE/deployment-previews
        credentials:
          username: USERNAME # Username associated with github token
          password: ${{ secrets.GITHUB_TOKEN }} # Repo github token
      steps:
        - id: auth
          uses: google-github-actions/auth@v1
          with:
            credentials_json: ${{ secrets.GCP_CREDENTIALS }}
            create_credentials_file: true # Required for the check_status.py file

        - name: Setup Cloud SDK
          uses: google-github-actions/setup-gcloud@v1.1.1
          with:
            project_id: PROJECT_ID # Project id from GCP

        - name: Link revision on pull request
          run: |
            python3 /app/check_status.py set --project-id PROJECT_ID \
              --region LOCATION \
              --service APP_NAME \
              --pull-request ${{ github.event.pull_request.number }} \
              --repo-name ${{ github.repository }} \
              --commit-sha ${{ github.event.pull_request.head.sha }}

Once all your checks pass, you should see this new banner after clicking the "Show all checks" button towards the end of the PR page.

Upon clicking the “Details” link you should be redirected to your branch preview URL. Keep in mind that all the revisions are using the same database.

Bonus 2 : Link a new database Cloud SQL instance to your revision

Sometimes you don't want your feature branch to directly impact the staging database. Say you're modifying or adding an entity and you don't want to write bad data into the staging database by mistake. To avoid this, I created a few steps that clone the staging database when the developer requests it by adding the "needs_db" keyword to the PR title.

1. Add this step to the configure_image job

    - name: Clone Cloud SQL instance
      id: clone_sql_instance
      if: contains(github.event.pull_request.title, 'needs_db')
      run: |
        INSTANCE_NAME="instance_name"
        CLONE_NAME="instance_name-pr-${{ github.event.pull_request.number }}"
        gcloud sql instances clone "$INSTANCE_NAME" "$CLONE_NAME"
      continue-on-error: true

This step clones the project's Cloud SQL instance named "instance_name" if the title of the PR contains the keyword "needs_db". For me this is the easiest solution: if a developer forgot to write the keyword in the initial PR title, they can always go back and edit the title so that the Cloud SQL instance is cloned. The cloned instance is named with the PR number, which makes it easy to find which database is linked to which PR.
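To make the naming convention concrete, here is a small sketch of the same decision in plain bash: check the PR title for the keyword, then derive the clone name from the PR number. The helper names `needs_db` and `clone_name` and the sample title are made up for illustration. The check lowercases the title because `contains()` in GitHub Actions ignores case.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed when the PR title contains the "needs_db" keyword.
# The title is lowercased first to mirror the case-insensitive contains() check.
needs_db() {
  title=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
  case "$title" in
    *needs_db*) return 0 ;;
    *) return 1 ;;
  esac
}

# Hypothetical helper: name of the cloned instance for a given PR number.
clone_name() {
  printf '%s-pr-%s\n' "$1" "$2"
}

pr_title="Add invoice entity needs_db"   # sample title
pr_number=42                             # sample PR number
if needs_db "$pr_title"; then
  echo "Would clone into: $(clone_name "instance_name" "$pr_number")"
else
  echo "No database clone requested"
fi
# prints: Would clone into: instance_name-pr-42
```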

2. If applicable, update your environment files so that they point to your new database IP Address

You can get your new instance's IP address with this step. The if statement refers to the Clone Cloud SQL instance step, which is why we need to give that step an id. This step also goes into the configure_image job.

    - name: Update database url
      # outcome (not conclusion) holds the real result of a step that sets continue-on-error
      if: contains(github.event.pull_request.title, 'needs_db') && steps.clone_sql_instance.outcome == 'success'
      run: |
        DB_PRIVATE_IP=$(gcloud sql instances describe instance_name-pr-${{ github.event.pull_request.number }} --format='get(ipAddresses[2].ipAddress)')
        # Update .env files here

Now you should have a new database instance linked to your revision. This new database will appear in the SQL service of your GCP project.
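How you update your environment files depends on your project, so take the following only as a rough sketch. It assumes a simple KEY=VALUE env file and a made-up key called DATABASE_HOST; the helper name `set_env_value` is also hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set KEY=VALUE in an env file.
# It drops any existing line for the key and appends the new value at the end.
set_env_value() {
  file="$1"
  key="$2"
  value="$3"
  tmp=$(mktemp)
  # grep exits with 1 when nothing is left to print, hence the "|| true"
  grep -v "^${key}=" "$file" > "$tmp" || true
  printf '%s=%s\n' "$key" "$value" >> "$tmp"
  mv "$tmp" "$file"
}

# Demo on a throwaway file; the key names and IP addresses are made up.
env_file=$(mktemp)
printf 'DATABASE_HOST=10.0.0.5\nDATABASE_NAME=app\n' > "$env_file"
set_env_value "$env_file" "DATABASE_HOST" "10.0.0.9"
cat "$env_file"
# prints:
# DATABASE_NAME=app
# DATABASE_HOST=10.0.0.9
```

In the workflow step, the value passed in would be the DB_PRIVATE_IP variable computed just before.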

Clean up

Now that you have plenty of revisions and database instances you need to clean them up after your PR gets approved and you merge into the main branch.

I usually run my clean up in a different workflow file that is executed on push onto the main branch.

    env:
      DOCKER_BUILDKIT: 1
      COMPOSE_DOCKER_CLI_BUILD: 1
      # This is to speed up docker compose

    on:
      push:
        branches:
          - 'main'

1. Clean up the tags using the check_status file provided by the Google deployment previews image.

If you haven't added the image to your organization's package registry yet, you can follow the beginning of the "Preview your branch directly in Github" bonus step that we covered earlier in this blog.

    clean_up_tags:
      needs: deploy
      environment: staging
      runs-on: ubuntu-latest
      container: # Using the ghcr.io image that we pushed
        image: ghcr.io/ASSOCIATION_NAME/deployment-previews
        credentials:
          username: USERNAME # Username associated with github token
          password: ${{ secrets.GITHUB_TOKEN }} # Repo github token
      steps:
        - id: auth
          uses: google-github-actions/auth@v1
          with:
            credentials_json: ${{ secrets.GCP_CREDENTIALS }}
            create_credentials_file: true # Required for the check_status.py file

        - name: Setup Cloud SDK
          uses: google-github-actions/setup-gcloud@v1.1.1
          with:
            project_id: PROJECT_ID # Project id from GCP

        - name: Clean up old tag
          run: |
            python3 /app/check_status.py cleanup --project-id PROJECT_ID \
              --region LOCATION \
              --service APP_NAME

Cleaning up tags is important because it disables the branch previews that have been merged, which makes the clean up script of the next step simpler.

2. Remove old images and merged revisions with a custom script

Through my research I've not found an easy way to delete unused revisions and related images in Artifact Registry so I created my own clean up script in bash.
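The core of that script is deciding whether an image digest is still used by an active revision. Here is a minimal sketch of that check on its own, without any gcloud calls. It assumes image references end in @sha256:..., and the helper names `image_digest` and `digest_in_use` as well as the digests are made up for illustration. It compares digests exactly, which is a slightly stricter variant of the pattern match used in my script.

```shell
#!/usr/bin/env bash
# Hypothetical helper: keep only the digest of a reference shaped like
# HOST/PROJECT/REPO/IMAGE@sha256:...
# If there is no "@", the input is returned unchanged.
image_digest() {
  printf '%s\n' "${1##*@}"
}

# Hypothetical helper: succeed when the first argument exactly equals
# one of the remaining arguments.
digest_in_use() {
  wanted="$1"
  shift
  for d in "$@"; do
    [ "$d" = "$wanted" ] && return 0
  done
  return 1
}

active="sha256:aaa sha256:bbb"   # made-up digests of active revisions
for candidate in sha256:aaa sha256:ccc; do
  # $active is intentionally unquoted so that it splits into separate digests
  if digest_in_use "$candidate" $active; then
    echo "keep   $candidate"
  else
    echo "delete $candidate"
  fi
done
# prints:
# keep   sha256:aaa
# delete sha256:ccc
```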

    clean_up_revisions_and_artifacts:
      needs: clean_up_tags
      environment: staging
      runs-on: ubuntu-latest
      steps:
        - name: Checkout code
          uses: actions/checkout@v3

        - name: Login
          uses: google-github-actions/auth@v1
          with:
            credentials_json: ${{ secrets.GCP_CREDENTIALS }}

        - name: Fix permissions
          run: chmod 700 ./gcloud_scripts/clean_up.sh

        - name: Run clean up script
          run: ./gcloud_scripts/clean_up.sh -r LOCATION -s APP_NAME -p PROJECT_ID
          shell: bash

The script:

    #!/bin/bash

    while getopts r:s:p: flag # Flag handler
    do
      case "${flag}" in
        r) region=${OPTARG};;
        s) service=${OPTARG};;
        p) project_id=${OPTARG};;
        *) ;;
      esac
    done

    # Clean revisions
    for rev in $(gcloud run revisions list --region="$region" --service="$service" --filter="status.conditions.type:Active AND status.conditions.status:'False'" --format='value(metadata.name)') # Fetch all the disabled revisions
    do
      echo "Deleting revision : $rev"
      gcloud run revisions delete "$rev" --region="$region" --quiet # Quiet flag is necessary
    done

    # Clean artifact registry
    active_images_digest=()

    # Fetch all the revisions that are not retired
    for active_revisions in $(gcloud run revisions list --region="$region" --service="$service" --filter="-status.conditions.reason:Retired" --format='value(metadata.name)')
    # Make an array of the image digests of active revisions
    do
      active_image=$(gcloud run revisions describe "$active_revisions" --region="$region" --format='value(image)')
      # Keep only the digest part; these offsets depend on the length of your image path
      active_image=${active_image:89:160}
      echo "Active image : $active_image"
      active_images_digest+=("$active_image")
    done

    # List images in Artifact Registry
    # "shopify-backend" is my Artifact Registry repository, replace it with your REPOSITORY
    for digest in $(gcloud artifacts docker images list "$region"-docker.pkg.dev/"$project_id"/shopify-backend/"$service" --format='value(version)')
    do
      # Delete images that are not in the array of active image digests
      if [[ ! " ${active_images_digest[*]} " =~ ${digest} ]]; then
        echo "Digest to delete : $digest"
        gcloud artifacts docker images delete "$region"-docker.pkg.dev/"$project_id"/shopify-backend/"$service"@"$digest" --delete-tags
      fi
    done

Bonus : Database clean up

If you decided to add the database instance duplication to your workflow, here is the job that deletes the clone once the PR is merged into the main branch. It includes a couple of little tricks to find the PR title and number for the commit the workflow runs on. We need the PR title to check whether the commit is part of a PR that had the 'needs_db' keyword.
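One of those tricks is passing a value from one step to the next through the file that GitHub Actions exposes as $GITHUB_ENV, using the NAME<<DELIMITER syntax. As a small sketch you can try locally, the helper below writes a value in that format. The function name `write_env` and the sample title are made up, and outside of a workflow the sketch falls back to a temporary file.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append a variable to an env file using the
# NAME<<EOF ... EOF syntax that GitHub Actions accepts in $GITHUB_ENV.
write_env() {
  name="$1"
  value="$2"
  env_file="$3"
  {
    printf '%s<<EOF\n' "$name"
    printf '%s\n' "$value"
    printf 'EOF\n'
  } >> "$env_file"
}

# Outside of GitHub Actions, GITHUB_ENV is unset, so fall back to a temp file.
target="${GITHUB_ENV:-$(mktemp)}"
write_env "PR_TITLE" "Add invoice entity needs_db" "$target"
cat "$target"
```

Later steps can then read the value as env.PR_TITLE in an if condition, which is what the job relies on.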

    teardown_pr_db:
      environment: staging
      runs-on: ubuntu-latest
      steps:
        - name: Checkout code
          uses: actions/checkout@v3

        - name: Login
          uses: google-github-actions/auth@v1
          with:
            credentials_json: ${{ secrets.GCP_CREDENTIALS }}

        - name: Find PR title
          env:
            GH_TOKEN: ${{ secrets.GITHUB_TOKEN }} # The gh CLI needs a token inside workflows
          run: |
            PR_TITLE=$(gh pr list --search ${{ github.sha }} --state merged --json title --jq '.[0].title')
            echo "PR_TITLE<<EOF" >> $GITHUB_ENV
            echo "$PR_TITLE" >> $GITHUB_ENV
            echo "EOF" >> $GITHUB_ENV

        - name: Find PR number
          if: contains(env.PR_TITLE, 'needs_db')
          env:
            GH_TOKEN: ${{ secrets.GITHUB_TOKEN }}
          run: |
            PR_NUMBER=$(gh pr list --search ${{ github.sha }} --state merged --json number --jq '.[0].number')
            echo "PR_NUMBER<<EOF" >> $GITHUB_ENV
            echo "$PR_NUMBER" >> $GITHUB_ENV
            echo "EOF" >> $GITHUB_ENV

        - name: Remove deletion protection
          if: contains(env.PR_TITLE, 'needs_db')
          run: gcloud sql instances patch instance_name-pr-${{ env.PR_NUMBER }} --no-deletion-protection

        - name: Delete database
          if: contains(env.PR_TITLE, 'needs_db')
          # Same clone name as in the patch step above
          run: gcloud sql instances delete instance_name-pr-${{ env.PR_NUMBER }}

Done!

Voilà, you have now implemented a really good development flow for your personal or organization projects. I know this is a lot to take in, so don't hesitate to leave comments on this blog to help improve it or to ask any questions!